AI hallucination, in which a model generates incorrect or misleading information and presents it as fact, is a growing concern in research fields such as science, medicine, and statistics. Because AI cannot reliably distinguish factual from erroneous data on its own, researchers must adopt robust methods to protect data integrity. This article explores seven techniques for reducing AI hallucination and improving the accuracy of AI-generated content, along with example prompts for refining AI responses.

1. Conduct Cross-Verification Before Use (Cross-Check & Validation)

Before using AI-generated content, compare it against at least two or three reliable, independent sources.

- Validate numerical data against official databases or trusted scientific journals.

- If inconsistencies arise, rephrase the query or explicitly ask the AI for its sources.

- Use statistical checks, such as comparing a reported value against the range of values from independent sources, to gauge data reliability.

Example Prompt: Instead of asking, “What is the latest diabetes statistic?” refine the prompt to: “Provide the latest diabetes statistics from WHO or CDC for the year 2023, including a link or DOI for each source.” Open each link yourself, because AI-generated citations can also be hallucinated.
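
The numerical cross-check described above can be sketched in a few lines of Python. This is a minimal illustration, not a standard method: the function name `cross_check`, the 5% tolerance, and the sample numbers are all assumptions chosen for the example, and the reference values must come from sources you have looked up yourself.

```python
from statistics import median


def cross_check(ai_value, reference_values, rel_tol=0.05):
    """Compare an AI-reported number with values collected from independent sources.

    rel_tol is an assumed threshold (5% here); choose one that suits the data.
    """
    if len(reference_values) < 2:
        raise ValueError("collect at least two independent reference values")
    reference = median(reference_values)
    if reference == 0:
        raise ValueError("relative difference is undefined for a zero reference")
    relative_difference = abs(ai_value - reference) / abs(reference)
    return {
        "reference_median": reference,
        "relative_difference": relative_difference,
        "consistent": relative_difference <= rel_tol,
    }


# Placeholder numbers for illustration only; they are not real statistics.
result = cross_check(ai_value=12.0, reference_values=[10.0, 10.2, 9.8])
print(result["consistent"])  # False: 12.0 is 20% away from the median of 10.0
```

A result flagged as inconsistent is a signal to go back to the official sources and re-query the AI, not proof that either number is wrong.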

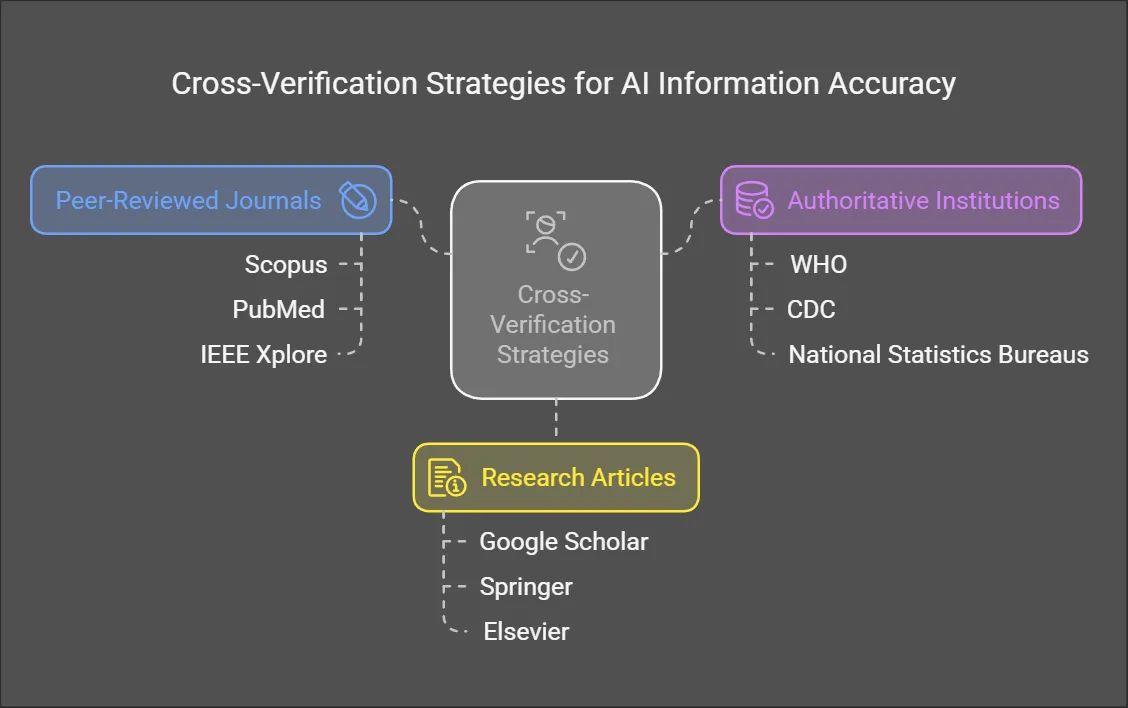

2. Use Trusted Databases Alongside AI (Use Reliable Sources)

AI may pull data from a broad range of sources, including unreliable websites. To mitigate this risk:

- Cross-reference AI responses with peer-reviewed literature indexed in scholarly databases such as Scopus, PubMed, and IEEE Xplore.

- Verify statistical data against authoritative institutions like WHO, CDC, or national statistics bureaus.

- Prefer research articles found through Google Scholar or published by Springer or Elsevier over general web content.

Example Prompt: Rather than asking, “Give me an overview of heart disease trends,” structure it as: “Summarize the last five years of heart disease trends using WHO data and PubMed-indexed studies, including relevant citations.”

3. Set Clear Parameters for AI Responses (Define Scope & Format)

Establishing clear boundaries for AI-generated content helps keep the AI from making baseless assumptions.

- Specify the type of data needed: statistical, analytical, or article references.

- Define the format: concise summaries, in-depth reports, or structured data tables.

- Use directives that limit AI speculation, such as “Only provide information supported by cited sources”.

Example Prompt: Instead of a vague query like “Summarize climate change impact,” use: “Provide an in-depth analysis of climate change impact from IPCC reports and Nature journal publications, focusing on statistical evidence.”

4. Evaluate Logical Consistency (Logic & Cohesion Check)

Since AI-generated responses may contradict established knowledge, users must assess the logical flow and consistency of information.

- Review AI-generated data for logical contradictions.

- Cross-check numerical values and names of referenced institutions.

- If a claim appears exaggerated, ask the AI to provide supporting studies.

Example Prompt: Avoid a simple request like “Explain the latest vaccine breakthrough.” Instead, ask: “Summarize the latest peer-reviewed research on vaccine developments published in Science or Nature, including DOI references.”
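
When a response includes DOI references, a small script can pull them out so that each one can be opened and checked by hand. The sketch below is one possible approach under stated assumptions: the helper names `extract_dois` and `resolver_url` are hypothetical, the regular expression covers the common modern DOI shape (`10.` followed by a registrant code and a suffix) and may miss unusual or older DOIs, and the DOI in the sample text is a placeholder.

```python
import re

# Assumed pattern for the common modern DOI shape; not an exhaustive definition.
DOI_PATTERN = re.compile(r"\b10\.\d{4,9}/[-._;()/:A-Za-z0-9]+")


def extract_dois(text):
    """Return the unique DOI-like strings found in text, in order of appearance."""
    found = []
    for match in DOI_PATTERN.findall(text):
        doi = match.rstrip(".,;")  # drop sentence punctuation captured at the end
        if doi not in found:
            found.append(doi)
    return found


def resolver_url(doi):
    """Build the doi.org link a reviewer can open to confirm the reference exists."""
    return f"https://doi.org/{doi}"


# Placeholder response text; the DOI below is not a real reference.
response = "The study (doi:10.1234/example.5678) reports the trial results."
for doi in extract_dois(response):
    print(resolver_url(doi))  # https://doi.org/10.1234/example.5678
```

A well-formed DOI only shows that the string looks right. Confirm that the link resolves and that the article behind it actually supports the claim.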

5. Refine Prompts for Maximum Accuracy (Improve Prompt Engineering)

Vague queries can lead to unreliable AI responses. Structured prompts make it more likely that the AI draws on high-quality data.

- Clearly state the required sources (e.g., academic journals, government reports).

- Define the expected output format (e.g., a bulleted list, a table, or an in-depth summary).

- Ask the AI to indicate data reliability (e.g., “Indicate whether each fact is peer-reviewed”).

Example Prompt: Instead of “Explain AI’s impact on education,” refine it to: “Provide a systematic review of AI’s role in education based on articles from IEEE Xplore and academic journals, including citations.”
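
The three elements above (required sources, output format, and a reliability request) can be assembled by a small template function so that every query follows the same structure. This is a minimal sketch: `build_prompt` and its parameters are hypothetical names introduced for illustration, and the wording of the instructions is only one reasonable choice.

```python
def build_prompt(task, sources, output_format, period=None):
    """Assemble a structured prompt that names sources, format, and reliability rules."""
    if not sources:
        raise ValueError("name at least one required source")
    lines = [
        f"Task: {task}",
        f"Use only these sources: {', '.join(sources)}.",
    ]
    if period:
        lines.append(f"Time period: {period}.")
    lines.append(f"Output format: {output_format}.")
    lines.append("Cite a source for every factual claim and indicate whether it is peer-reviewed.")
    lines.append("If no supporting source is available, say so instead of guessing.")
    return "\n".join(lines)


prompt = build_prompt(
    task="Review AI's role in education",
    sources=["IEEE Xplore", "academic journals"],
    output_format="bulleted list with citations",
)
print(prompt)
```

Keeping the template in one place also makes it easier to record exactly which prompt produced a given response.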

6. Seek Expert Review for Validation (Consult Specialists)

For highly specialized topics, consulting experts is essential to confirm the accuracy of AI-generated data.

- Share AI-generated content with subject-matter experts in fields like medicine, law, and engineering.

- Use peer review to cross-validate information before publication.

- If AI produces conflicting data, request feedback from professionals.

Example Prompt: For a legal analysis, instead of “Summarize international copyright law,” specify: “Provide a comparison of international copyright laws from sources such as WIPO, referencing legislative documents and expert opinions.”

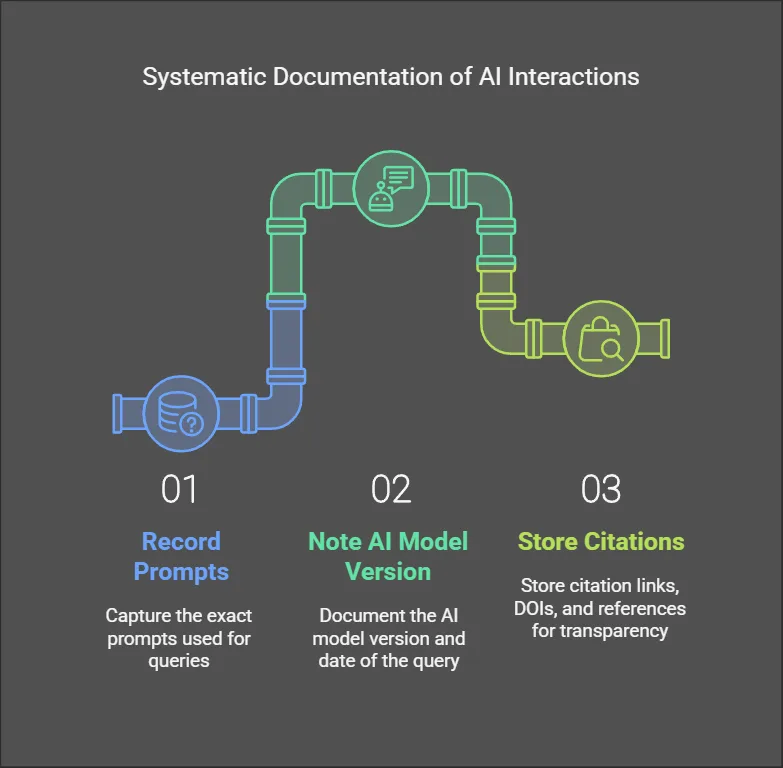

7. Document AI Interactions for Future Verification (Systematic Record-Keeping)

Maintaining a structured record of AI-generated data ensures accountability and facilitates future verification.

- Record the exact prompts used for queries.

- Note the AI model version and date of the query.

- Store citation links, DOIs, and references for transparency.

Example Prompt: When using AI for academic research, include: “Store this prompt and response, along with the timestamp and reference sources, in a structured documentation file.” If the AI tool cannot save files itself, copy the resulting entry into your own records.
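
The record-keeping itself can be automated on the researcher's side. The sketch below appends one JSON object per interaction to a log file (the JSON Lines layout); the function name `log_interaction`, the field names, the file name, and the model label are all assumptions for illustration, so adapt them to your own documentation standard.

```python
import json
from datetime import datetime, timezone
from pathlib import Path


def log_interaction(log_path, prompt, response, model, sources):
    """Append one AI interaction to a JSON Lines file and return the stored record."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),  # date and time of the query
        "model": model,          # AI model name and version as reported by the tool
        "prompt": prompt,        # the exact prompt used
        "response": response,
        "sources": list(sources),  # citation links, DOIs, and references
    }
    with Path(log_path).open("a", encoding="utf-8") as handle:
        handle.write(json.dumps(record, ensure_ascii=False) + "\n")
    return record


# Hypothetical values for illustration only.
log_interaction(
    "ai_interactions.jsonl",
    prompt="Summarize the last five years of heart disease trends, with citations.",
    response="(paste the AI response here)",
    model="example-model-v1",
    sources=["https://doi.org/10.1234/example.5678"],
)
```

Because each line is an independent JSON object, the log can be appended to indefinitely and filtered later by date, model, or source.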

While AI is a powerful tool for research, it is not infallible. Researchers must apply rigorous validation techniques, consult experts, and structure AI queries effectively to mitigate the risk of hallucinated data. By employing these seven strategies, users can get more from AI while protecting data credibility. Thoughtful prompt engineering and systematic verification support high-quality, reliable research outcomes.