Have you been keeping up with the latest AI releases? If so, you’ve probably heard about Claude 3.7 Sonnet (Claude 3.7 for short), Anthropic’s newest AI model that’s making waves in the tech community. As someone who’s been closely following AI developments, I can tell you that Claude 3.7 isn’t just another update – it’s a game-changer in the world of artificial intelligence.

What Makes Claude 3.7 Special?

Claude 3.7 AI introduces something truly innovative: a hybrid reasoning system. Unlike previous AI models that forced you to choose between speed and depth, Claude 3.7 can think in two different modes – fast and deep – all within a single model. This means you get quick answers when you need them and thorough, step-by-step thinking when faced with complex problems.

Remember Claude 3.5 Sonnet? While it was impressive, Claude 3.7 takes things to a whole new level. It’s smarter, faster, and much more capable across a wide range of tasks. The AI community has been buzzing with excitement since Anthropic first hinted at this new hybrid reasoning approach.

As a frequent user of AI for coding assistance, I couldn’t wait to see how Claude 3.7 would change my workflow. Would it really deliver improved coding skills? Would the extended thinking mode actually make a difference for complex problems? And what about the new CLI tool called Claude Code that promised to transform development tasks?

Even more surprising – Anthropic decided not to charge extra for these upgrades. Same pricing, more power. An AI that’s stronger without costing more? No wonder everyone was counting down to the release date!

With competitors like OpenAI’s O3 Mini and DeepSeek R1 also making headlines, Claude 3.7 had a lot to prove. So I did some research to see what’s new, how it performs, and what real users are experiencing with it in actual coding scenarios.

New Features in Claude 3.7 AI

Let’s dive into what makes Claude 3.7 special. Anthropic has packed this update with features that make it incredibly useful for developers and AI enthusiasts alike.

Extended “Thinking” Mode (Hybrid Reasoning)

This is the standout feature everyone’s talking about. Claude 3.7 AI can switch between giving quick answers and doing deep, methodical reasoning – all within the same model.

Think of it like having two gears: a fast mode for simple questions and a slow, thorough mode for tough problems. You control the switch: extended thinking is something you turn on, and API users can cap how many tokens Claude spends “thinking” about a problem.

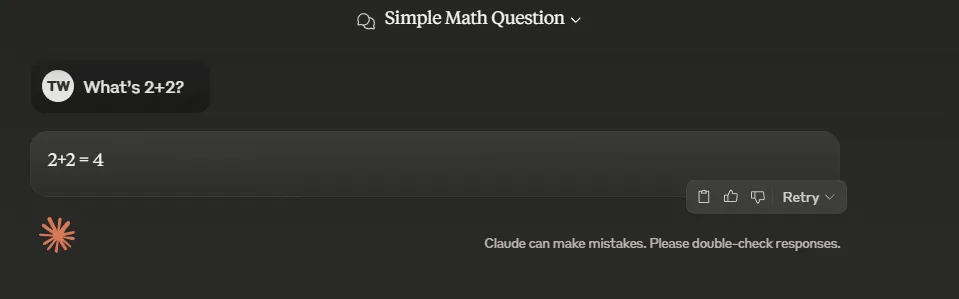

For example, when I ask Claude 3.7 a complex coding question, I can allow it to use extended thinking mode to reason through the problem step by step. But for a simple question like “What’s 2+2?”, it answers immediately.

I tried the same “What’s 2+2?” question on O3 Mini and Grok. OpenAI’s model took 2-3 seconds, while Grok and Claude 3.7 answered almost instantly. Claude 3.7 even labeled it a “simple math question.”

This hybrid approach solves a major pain point with earlier AI models, which often made you choose between speed and depth. Now you get both when you need them.

Just remember that using the deep thinking mode takes longer and uses more tokens, so it’s best saved for when you really need it.
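For API users, the two gears might look something like the sketch below. It only builds the request payload and never calls the API, so it runs without a key. The `thinking` field with `type` and `budget_tokens` follows Anthropic’s Messages API as I understand it; the model ID, the helper name `build_request`, and the default numbers are my own assumptions, so check the current docs before relying on them.

```python
from typing import Any, Optional

# Assumed model ID for Claude 3.7 Sonnet; verify against Anthropic's docs.
MODEL_ID = "claude-3-7-sonnet-20250219"


def build_request(prompt: str, thinking_budget: Optional[int] = None,
                  max_tokens: int = 4096) -> dict[str, Any]:
    """Build a Messages API payload, optionally enabling extended thinking.

    thinking_budget=None -> fast mode (no thinking block in the payload).
    thinking_budget=N    -> extended thinking capped at N tokens.
    """
    request: dict[str, Any] = {
        "model": MODEL_ID,
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    if thinking_budget is not None:
        # The thinking budget counts toward max_tokens, so it must be smaller.
        if thinking_budget >= max_tokens:
            raise ValueError("thinking_budget must be less than max_tokens")
        request["thinking"] = {"type": "enabled", "budget_tokens": thinking_budget}
    return request


fast = build_request("What's 2+2?")
deep = build_request("Find the race condition in this scheduler...", thinking_budget=2048)
print("thinking" in fast, deep["thinking"]["budget_tokens"])  # False 2048
```

With the official `anthropic` Python SDK installed, a payload like `deep` should be usable as `client.messages.create(**deep)`, though I’d treat that as something to verify rather than a guarantee.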

Claude Code – The Game-Changing CLI Assistant

Claude Code is perhaps the most exciting new tool for developers. It’s a command-line interface (CLI) that lets you interact with Claude 3.7 directly through your terminal for coding tasks.

This means Claude can act like a developer’s assistant – editing files, running tests, debugging code, and even committing to GitHub – all through simple CLI commands.

Imagine saying “Hey Claude, open my app.js and optimize the search function” and watching it happen. Or asking Claude to run your test suite and fix any failing tests. It feels like having a super-smart junior developer who never gets tired.

I tried Claude Code for a simple refactoring task in one of my Python scripts. Not only did it provide updated code, but it actually executed the code to verify everything worked (under my supervision, of course).

Having an AI directly working with code on my machine feels almost like science fiction. Claude Code is currently in limited preview, so not everyone has access yet. It works under human oversight (thankfully!), meaning you still review and approve its changes before they’re applied.

While it’s just a glimpse of the future of AI-assisted development, it’s incredibly promising.

Improved Coding Abilities

Beyond the fancy new CLI, Claude 3.7 AI is generally much better at coding tasks than previous versions.

Anthropic has made significant improvements under the hood so that Claude understands programming contexts more deeply. It follows coding instructions better, documents its logic more clearly, and catches its own mistakes.

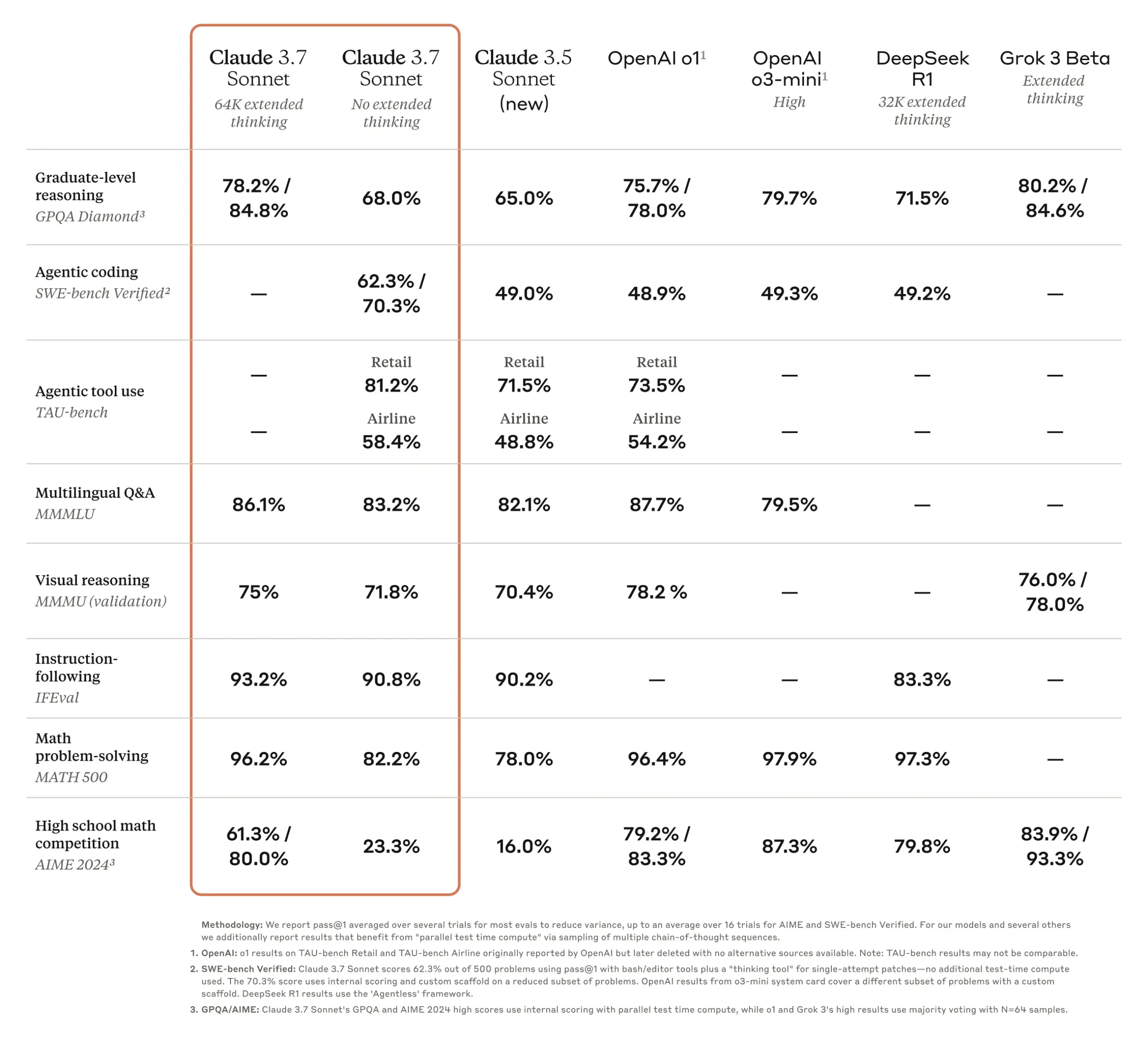

Source: anthropic.com

In my experience using Claude 3.7 through Windsurf (comparing it to 3.5), it consistently generates cleaner code with fewer errors. Early testers noticed it handles full-stack development tasks with fewer problems – everything from front-end components to back-end logic.

Anthropic reports that partner companies found Claude 3.7 to be “best-in-class” for real-world coding, handling complex codebases and tool use better than competing models.

Working with Claude 3.7 feels like pair programming with a knowledgeable (and incredibly fast) colleague. I even threw an entire multi-file project at it, and it kept track of the context much better than Claude 3.5 ever did.

Large Memory (200K Token Context Window)

If you’ve ever been frustrated by AI models forgetting what you said just a few exchanges ago, you’ll appreciate this. Claude 3.7 Sonnet has a 200,000-token context window, the same size as Claude 3.5 Sonnet’s. The 128K figure often quoted for this release is the maximum output length, which Anthropic offers in beta alongside extended thinking.

In simple terms, it can read and remember extremely large documents or codebases. You could feed it sizable libraries or code repositories, and as long as they fit in the window, it can give coherent answers that reference all that information.

This is roughly equivalent to 150,000 words (think of a long novel). For developers, this means you often don’t need to chop your code into pieces when asking for help – Claude can take in the whole project architecture or analyze a massive log file all at once.

I tested this by providing Claude 3.7 with a lengthy API documentation file (about 67 pages) plus some code. It was able to use both the docs and the code to answer my question about integrating a feature. That was truly impressive! Models with smaller context windows would have struggled or required me to summarize the docs first.

One thing to keep in mind: just because it can handle 200K tokens doesn’t mean you should always max it out. Using the full context window can slow things down and every input token is billed, so if you’re watching your time (or budget), you might want to be selective about how much information you provide.
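Before pasting a pile of docs and code into a prompt, I like to do a rough size check. The sketch below is only an approximation: the four-characters-per-token rule of thumb is an assumption that holds loosely for English text, the function names are mine, and the token budget is a parameter you would set yourself, ideally well below whatever limit the current docs state.

```python
CHARS_PER_TOKEN = 4  # rough rule of thumb for English text; an assumption


def estimate_tokens(text: str) -> int:
    """Very rough token estimate; a real tokenizer will give different numbers."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division


def fits_in_budget(texts: list[str], token_budget: int,
                   reserve_for_reply: int = 4_000) -> bool:
    """Return True if the combined texts likely fit, leaving room for the answer."""
    total = sum(estimate_tokens(t) for t in texts)
    return total + reserve_for_reply <= token_budget


docs = "x" * 200_000  # stand-in for a ~200,000-character documentation file
code = "y" * 40_000   # stand-in for some source code

print(estimate_tokens(docs) + estimate_tokens(code))       # 60000
print(fits_in_budget([docs, code], token_budget=50_000))   # False
print(fits_in_budget([docs, code], token_budget=100_000))  # True
```

Setting the budget below the model’s hard limit is deliberate: it keeps requests faster and cheaper, and leaves slack for the estimate being off.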

Fewer Unnecessary Refusals

Anyone who’s used AI knows the frustration of hearing “I’m sorry, I can’t do that” for perfectly reasonable requests. Claude 3.5 was sometimes overly cautious, refusing requests that were actually fine.

Claude 3.7 improves dramatically in this area – Anthropic reports 45% fewer unnecessary refusals compared to 3.5.

In practical terms, 3.7 is less likely to reject or warn about benign queries. I noticed this almost immediately with certain coding tasks that might contain trigger words like “execute” that made older models hesitate. Claude 3.7 handled them without a fuss, while still correctly refusing truly inappropriate requests.

This quality-of-life improvement makes the AI feel less paranoid and more helpful, without compromising its safety guardrails.

These are the major improvements, but there are many smaller tweaks as well: better instruction-following, improved handling of structured data and long-form text, and a more nuanced understanding of what users actually want. Basically, Claude got smarter and more personable in this version.

To sum up, Claude 3.7 AI is packed with features designed to solve real problems that users had with previous models. But do these features actually translate to better performance? Let’s look at how it compares to the competition.

How Claude 3.7 AI Compares to Competitors

All the new features in the world don’t matter if Claude 3.7 can’t outperform its rivals. The good news? It’s seriously impressive in benchmark tests, often outclassing competing models by significant margins.

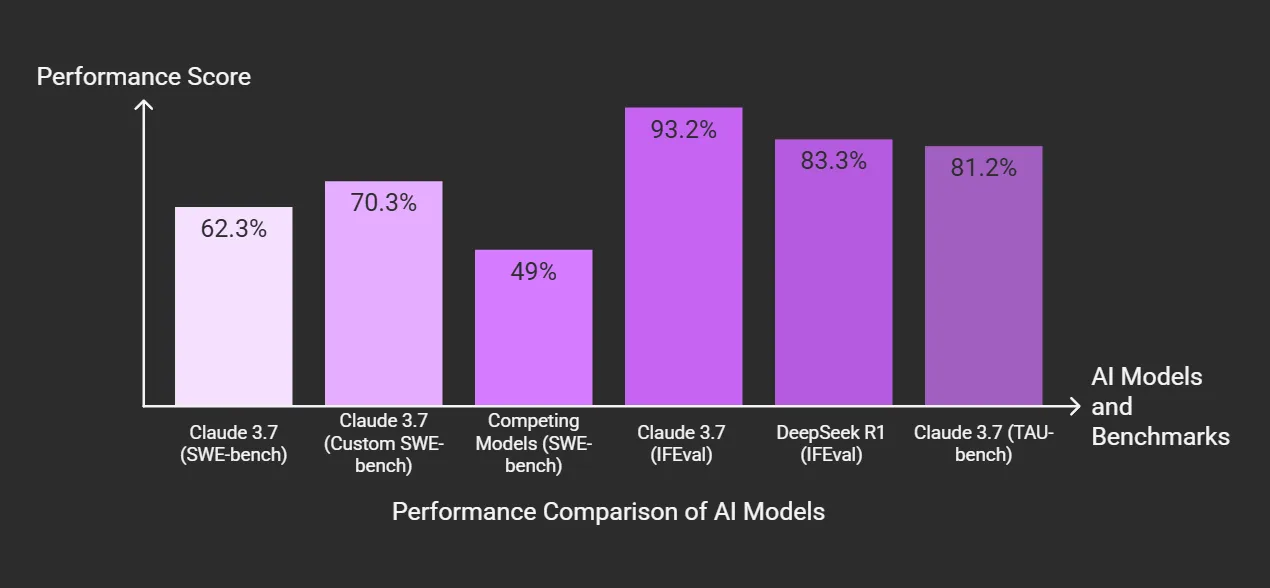

On SWE-bench Verified, a widely used software engineering benchmark, Claude 3.7 achieved about 62.3% accuracy (and up to 70.3% with some custom prompt scaffolding). In comparison, competing models were around the 49% mark. That’s a huge difference – practically a generational leap forward.

When I first saw those stats, I was shocked. Being a few percentage points better would be impressive enough, but being 13 points higher in accuracy is remarkable in AI benchmarking terms.
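It helps to separate percentage points from relative improvement here, since the two are easy to mix up. This small sketch uses the two figures quoted above (62.3% and roughly 49%); the function name `score_gap` is just mine.

```python
def score_gap(score: float, baseline: float) -> tuple[float, float]:
    """Return (percentage-point gap, relative improvement in %)."""
    points = score - baseline
    relative = points / baseline * 100
    return points, relative


points, relative = score_gap(62.3, 49.0)
print(f"{points:.1f} points, {relative:.0f}% relative improvement")
# 13.3 points, 27% relative improvement
```

So the 13-point gap works out to roughly a quarter more tasks solved than the 49% baseline.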

Claude 3.7 also excels at following instructions and general reasoning. One evaluation (IFEval) measuring how well models follow instructions found Claude 3.7 scoring 93.2% (with extended thinking mode on) versus 83.3% for DeepSeek R1.

In simple words, Claude does a better job understanding and executing what you ask compared to DeepSeek’s model, which had been gaining attention for being highly efficient given its low training cost.

What about OpenAI’s models? Claude 3.7 clearly aims to surpass OpenAI’s latest offerings. OpenAI’s O3 Mini was introduced as a fast and cost-effective model – kind of like a “little sibling” to their more powerful options.

O3 Mini excels at quick responses and is very affordable, but it doesn’t match Claude 3.7’s capabilities on complex tasks.

The AI community was recently debating O3 Mini versus DeepSeek R1 as representing a trade-off: O3 Mini was faster with more structured answers, while DeepSeek provided more detailed, step-by-step solutions. You essentially had to choose between a quick answer or a thorough one.

Claude 3.7 says “why not both?” with its hybrid reasoning approach. It delivers fast results when you need speed and can slow down for deeper analysis when you need thoroughness – all in one model. This flexibility means it often outperforms O3 Mini and DeepSeek R1 in their respective specialties.

In real-world coding tests, the partner companies Anthropic cites described Claude 3.7 as “best-in-class,” handling complex codebases and tool use better than other models they tried. These are vendor-reported results, but they suggest that even against OpenAI’s and others’ best efforts, Claude’s new version leads the pack for tough coding challenges.

Anthropic notes that Claude 3.7 achieved state-of-the-art performance on coding benchmarks like SWE-bench and a tool-use benchmark (TAU-bench), outperforming both Claude 3.5 and OpenAI’s models.

On TAU-bench, which tests AI agents on customer-service style tasks in airline and retail settings, Claude 3.7 scored 81.2% on the retail tasks, beating both its previous version and OpenAI’s model.

To be fair, no model wins at everything. Elon Musk’s xAI has a model called Grok (v3) that apparently dominates in pure math competitions (scoring higher on a high school math contest called AIME). Claude 3.7 wasn’t the top performer there – Grok 3 beat it in that specific area.

But for the things developers care about daily – coding, debugging, system integration – Claude 3.7 seems to have the edge. It’s like how one car might win a drag race but another is better on a road course; different models have different strengths. For coding “road courses,” Claude 3.7 is the new champion.

What impresses me most is the consistency of Claude 3.7’s performance. Some models show great results in benchmarks but disappoint in practice. With Claude, the benchmark wins align with my personal experience using it. It’s consistently good at what it does, which gives me confidence in relying on it for important tasks.

To summarize: Claude 3.7 AI generally outperforms OpenAI’s O3 Mini and DeepSeek R1 in complex reasoning and coding tasks, thanks to its hybrid thinking ability and overall upgrades. It offers both the speed that O3 Mini specializes in and the detailed approach that DeepSeek was known for, making the “speed vs depth” trade-off much less of an issue.

Cost vs. Value of Claude 3.7 AI

We’ve covered how impressive Claude 3.7 is, but what about the cost? Is the performance boost worth the price?

As someone with a limited budget, I care a lot about getting good value for my money. The good news is that Anthropic kept the pricing for Claude 3.7 the same as the previous version – there was no price increase for all these new features.

As of its release, Claude 3.7 costs $3 per million input tokens and $15 per million output tokens on the API. This includes the tokens used in the extended thinking mode.
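To get a feel for what those rates mean per request, here is a quick estimator. The prices are the ones quoted above; the token counts in the example are made up for illustration, and the function name is mine. Thinking tokens are counted at the output rate, per the pricing note above.

```python
INPUT_PRICE_PER_MTOK = 3.00    # USD per million input tokens (quoted above)
OUTPUT_PRICE_PER_MTOK = 15.00  # USD per million output tokens (quoted above)


def estimate_cost(input_tokens: int, output_tokens: int,
                  thinking_tokens: int = 0) -> float:
    """Estimate the USD cost of one request; thinking tokens bill as output."""
    billed_output = output_tokens + thinking_tokens
    return (input_tokens * INPUT_PRICE_PER_MTOK
            + billed_output * OUTPUT_PRICE_PER_MTOK) / 1_000_000


# Hypothetical request: 20,000 tokens of code in, 1,500 tokens of answer out.
quick = estimate_cost(20_000, 1_500)
# The same request with 8,000 tokens of extended thinking.
deep = estimate_cost(20_000, 1_500, thinking_tokens=8_000)
print(f"${quick:.4f} vs ${deep:.4f}")  # $0.0825 vs $0.2025
```

In this made-up case the thinking tokens more than double the bill, which is still only about twenty cents, but it adds up if extended mode is left on for everything.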

If you were already using Claude 3 or 3.5, you’re paying the same rate but getting a better model – essentially a free upgrade in performance. On the consumer side, Claude is available in a limited free tier and in paid Pro/Team plans, and these prices didn’t change with the 3.7 launch.

Anthropic clearly wanted to make it easy for users to switch to 3.7 without any financial barriers.

The $15 per million output tokens price point is comparable to other high-end models (similar to what OpenAI charged for some GPT-4 tiers). It’s not the cheapest option available.

We’ve seen something of a price war recently: OpenAI’s O3 Mini came in at about $1.10 per million input tokens (roughly 93% cheaper than their o1 model), and DeepSeek R1 was ultra-affordable, with input prices quoted as low as $0.14 per million tokens – practically free compared to the major players. Output tokens cost more on both.

These low prices turned heads and put pressure on the industry. However, it’s important to note that these cheaper models don’t match Claude 3.7’s capabilities. O3 Mini is less expensive but also less powerful than GPT-4 or Claude, while DeepSeek is incredibly cheap but still a first-generation model with limitations. So it’s not a direct comparison with Claude 3.7.

In terms of value, Claude 3.7 justifies its cost if you actually use its strengths. If you’re a developer who will use the extended reasoning to solve complex bugs, leverage the massive context window to analyze entire projects, or benefit from the coding capabilities to save hours of time – then the productivity gains likely outweigh the token costs.

Think of it this way: if Claude saves you even a couple of hours of work, that’s worth more than the few dollars of token costs you might spend.

On the other hand, if your needs are very basic – like simple Q&A or tasks that cheaper models can handle – you might not see enough benefit from Claude 3.7 to justify the higher price. For those simpler cases, a less expensive option might make more sense.

Personally, I’m thrilled to get a better Claude for the same price I was already paying. Also, making extended thinking an opt-in feature gives you control over how much extra computation you’re using. If I’m watching costs, I can choose to enable extended mode only when absolutely necessary, keeping token usage under control. (API users can even set a specific “thinking token budget” to balance cost versus quality, which is great for budgeting.)

What about the free tier? There is a free plan for Claude with daily message limits, and Claude 3.7 is available on all plans. However, the extended thinking mode is only available to paid users. That’s reasonable – the heavy reasoning presumably costs more to run, so they reserve it for paying customers.

This means you can still test Claude 3.7’s normal mode at no cost and see the general improvements. For many hobby projects or casual uses, the free tier might be sufficient. If you find yourself needing the full power, upgrading to Pro (or using the pay-as-you-go API) would be the next step. And as we’ve seen, those prices are unchanged and comparable to other top-tier models.

To summarize: Claude 3.7 AI gives you more capability for the same cost, which is a great deal in today’s AI landscape. Everyone’s needs will be different, but I haven’t experienced any regret using Claude 3.7 – in fact, I’m pleased I didn’t have to pay more for this impressive new model.

Potential Drawbacks of Claude 3.7 AI

No AI system is perfect, and that includes Claude 3.7. To be fair and balanced, let’s look at some limitations and issues you might encounter.

Overthinking Simple Problems

The very feature that makes Claude 3.7 powerful – the extended thinking mode – can sometimes be too much of a good thing. If you leave that mode on for every query, Claude might overanalyze simple questions and take longer than necessary to answer.

I experienced this myself when I accidentally used extended mode for an easy coding fix. Claude gave me a detailed explanation when a simple solution would have been enough.

It’s like having an extremely detail-oriented friend: great for difficult problems, but excessive for simple ones.

The solution is simple: use extended mode intentionally, and toggle it off for straightforward tasks. Once I started doing this, I got quick answers when I needed them and deep analysis when appropriate.
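If you call the model from your own scripts, you can make that decision in code. The sketch below is a deliberately crude keyword heuristic of my own, not anything Anthropic provides: the hint words, the length threshold, and the default budget are all assumptions you would tune for your own work.

```python
from typing import Optional

# Hypothetical hints that a prompt deserves deeper reasoning; tune for your own work.
HARD_TASK_HINTS = ("debug", "refactor", "race condition", "architecture", "prove", "optimize")


def pick_thinking_budget(prompt: str, default_budget: int = 4_000) -> Optional[int]:
    """Return a thinking budget for hard-looking prompts, or None for fast mode."""
    text = prompt.lower()
    looks_hard = len(text) > 500 or any(hint in text for hint in HARD_TASK_HINTS)
    return default_budget if looks_hard else None


print(pick_thinking_budget("Rename this variable to user_id"))         # None
print(pick_thinking_budget("Debug the deadlock in this worker pool"))  # 4000
```

A `None` result means “answer in fast mode”; anything else is the number of thinking tokens you are willing to spend on that query.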

Speed Trade-off

Related to the above point, when Claude enters deep reasoning mode, it’s slower. That’s expected – it’s literally thinking harder.

But it’s worth mentioning because if you’re used to near-instant responses, waiting a few extra seconds (or tens of seconds for really complex questions) might feel frustrating.

Personally, I think the wait is usually worth it when I see the quality of the detailed answers, but impatience is real. In comparison, OpenAI’s O3 Mini might give you an answer faster (since it doesn’t think as long), though that answer might be less thorough.

So you have a choice: wait a bit longer for a potentially better answer, or get a quick but possibly shallower response. Claude 3.7 lets you decide for each query, which is nice – just remember it will take longer in extended mode.

This isn’t a bug; it’s a feature. But impatient users might wonder, “Why is it taking so long to answer?”

Claude Code – Still New and Needs Supervision

While Claude Code is exciting, it’s a brand-new and somewhat experimental feature. It’s in limited preview, so not everyone can access it yet, and it might have some rough edges.

One obvious concern: it can execute commands on your behalf, which is powerful but potentially risky. I wouldn’t recommend letting it run freely in a production environment or on your only copy of important files.

Since it’s new, there might be bugs. Maybe it doesn’t handle certain file operations well, or it might get confused if your project has an unusual setup.

These issues will likely improve over time, but for now, it’s wise to be cautious. The last thing you want is your AI helper accidentally deleting the wrong directory because it misunderstood your instructions (this didn’t happen to me, but it’s a concern).

So use Claude Code in a safe environment until you build trust in it.
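One low-tech way to get that safe environment is to point any file-editing tool at a throwaway copy of your project rather than the original. The sketch below uses only Python’s standard library and is independent of Claude Code itself; the function name, the temp-directory prefix, and the ignore patterns are my own choices.

```python
import shutil
import tempfile
from pathlib import Path


def make_scratch_copy(project_dir: str) -> Path:
    """Copy a project into a fresh temp directory and return the copy's path."""
    source = Path(project_dir).resolve()
    scratch_root = Path(tempfile.mkdtemp(prefix="ai-sandbox-"))
    target = scratch_root / source.name
    # Skip bulky or sensitive entries; adjust the patterns for your project.
    shutil.copytree(
        source,
        target,
        ignore=shutil.ignore_patterns(".git", "node_modules", ".env"),
    )
    return target
```

You would call something like `make_scratch_copy("path/to/project")`, let the assistant work inside the returned directory, then diff the result against the original before copying anything back. Committing your work to Git before each session gives a similar safety net.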

Not the Best at Everything

As impressive as Claude 3.7 AI is, it’s not the absolute master of all domains. As mentioned earlier, models like xAI’s Grok can outperform Claude in certain areas, such as complex math problems.

If you ask Claude to solve an extremely complicated math puzzle or something outside its strengths, you might not get an optimal solution.

It’s very good at reasoning and coding, but I noticed that for highly specialized tasks or detailed trivia, it’s not dramatically different from other top models. In creative writing, it’s excellent, but so are other models.

In short, Claude 3.7 is top-tier, but not a magic solution for everything. Keep your expectations realistic depending on the task.

Overall, Claude 3.7’s limitations are relatively minor. With a bit of patience and caution, you’ll be fine. Considering the complexity of what this model can do, it’s remarkably well-behaved. Just remember that it’s a tool – an extremely advanced one – but you’re still the one guiding it.

Final Thoughts on Claude 3.7 AI

Using Claude 3.7 has been like working with an enthusiastic, incredibly knowledgeable friend who’s always available to help. Sometimes it provides more detail than needed, and occasionally it needs some guidance, but overall it makes work more productive and enjoyable.

For anyone who deals with coding, large projects, or complex problems regularly, I highly recommend trying Claude 3.7. It might completely change how you work, in the best possible way.

The hybrid reasoning approach that combines quick responses with deep thinking is genuinely revolutionary. Being able to choose the right mode for each situation gives you flexibility that wasn’t possible with previous AI models.

Claude Code, while still in preview, shows the exciting future of AI-assisted development. Having an AI that can directly interact with your code through the command line opens up new possibilities for collaboration between humans and machines.

The improvements in coding ability, context window size, and overall intelligence make Claude 3.7 a powerful tool for developers. Whether you’re debugging complex systems, refactoring legacy code, or building new features, Claude can provide valuable assistance.

And the fact that Anthropic delivered all these improvements without raising prices makes Claude 3.7 an even more attractive option. You get significantly more capability for the same cost, which is rare in the tech world.

While no AI is perfect, and Claude 3.7 has its limitations, the benefits far outweigh the drawbacks for most users. With some understanding of when to use extended thinking mode and when to keep things simple, you can get the most out of this impressive new model.

The AI landscape is evolving rapidly, with new models and capabilities appearing regularly. But Claude 3.7 represents a significant step forward, combining the best aspects of fast and thorough AI systems into a single, versatile model.

If you’re already using AI for work or personal projects, upgrading to Claude 3.7 is an easy recommendation. And if you’re new to AI assistants, Claude 3.7 offers an excellent entry point with its combination of intelligence, flexibility, and user-friendly design.

The future of AI-assisted work looks bright, and Claude 3.7 is leading the way.